Claude (Opus 4.7) vs ChatGPT (5.5) with Hospitable MCP — Real Use Case

I wanted to properly test Claude Opus 4.7 vs ChatGPT using the Hospitable MCP in a real scenario—not benchmarks, but something actually useful for hosting.

The Question

Where do my guests actually come from, and how has that changed over the past ~5 years?

We operate in Tbilisi, so this isn’t just curiosity—regional shifts matter a lot given everything going on globally.

Setup

- MCP setup was easy in both tools
- Same prompt used in both (written manually)
- No special tuning or iteration upfront

The Prompt I Used

“Can you visualize over time since the very beginning of each listing (grouped is fine) where our guests have come from? I want to see a changing or constant ‘demographic’. Watch out though, the location is not normalized that comes back from Hospitable / Airbnb. Codex had a lot of trouble with this. So look at the data then think it through first!”

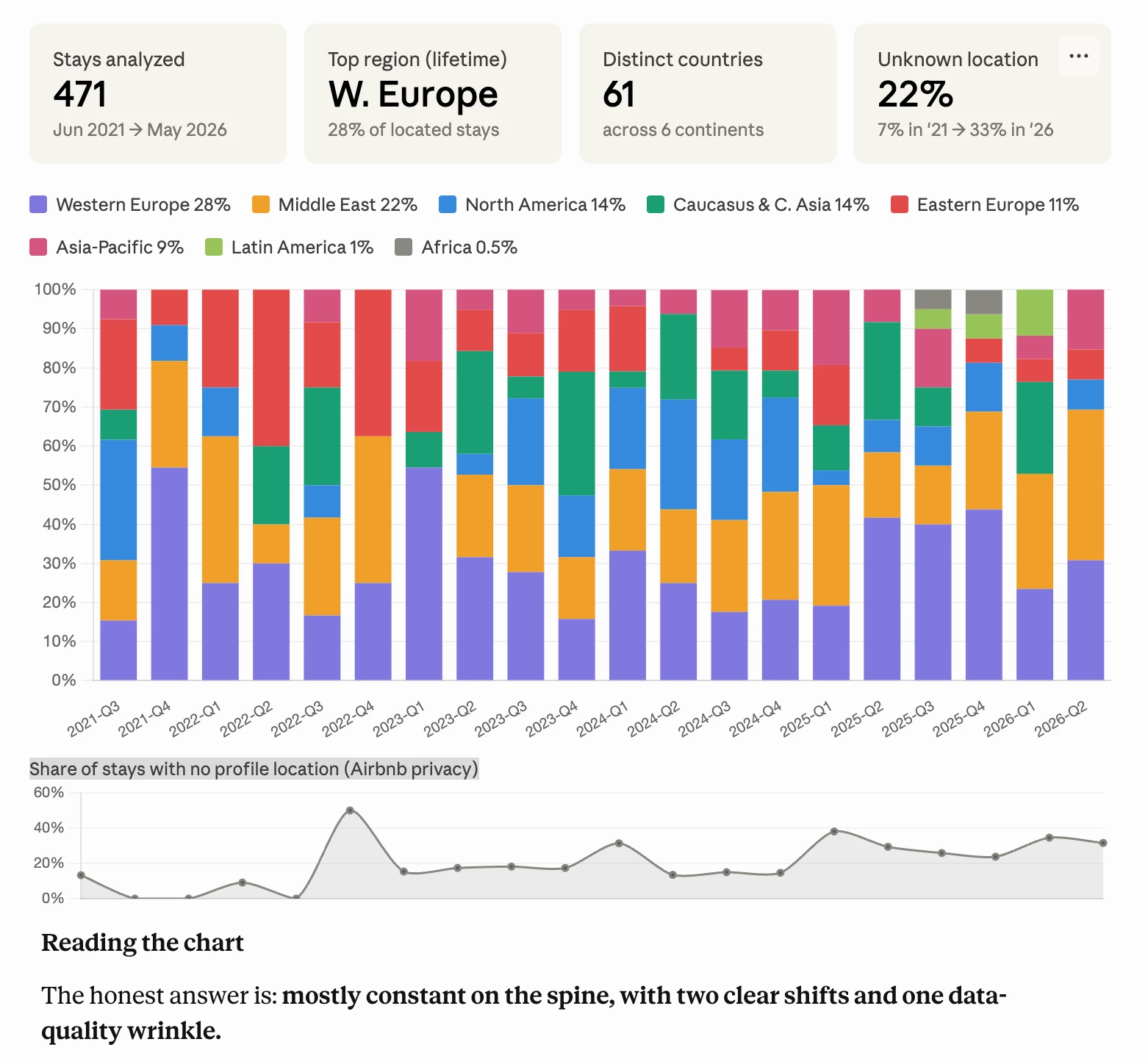

Claude Experience

Claude basically just ran with it. The first response already included:

- Clean chart
- Clear breakdown
- Actual insights (not just description)

What stood out most:

It didn’t just analyze — it challenged the dataset.

It flagged gaps, suggested ways to improve the analysis, and then executed on that idea in the next run.

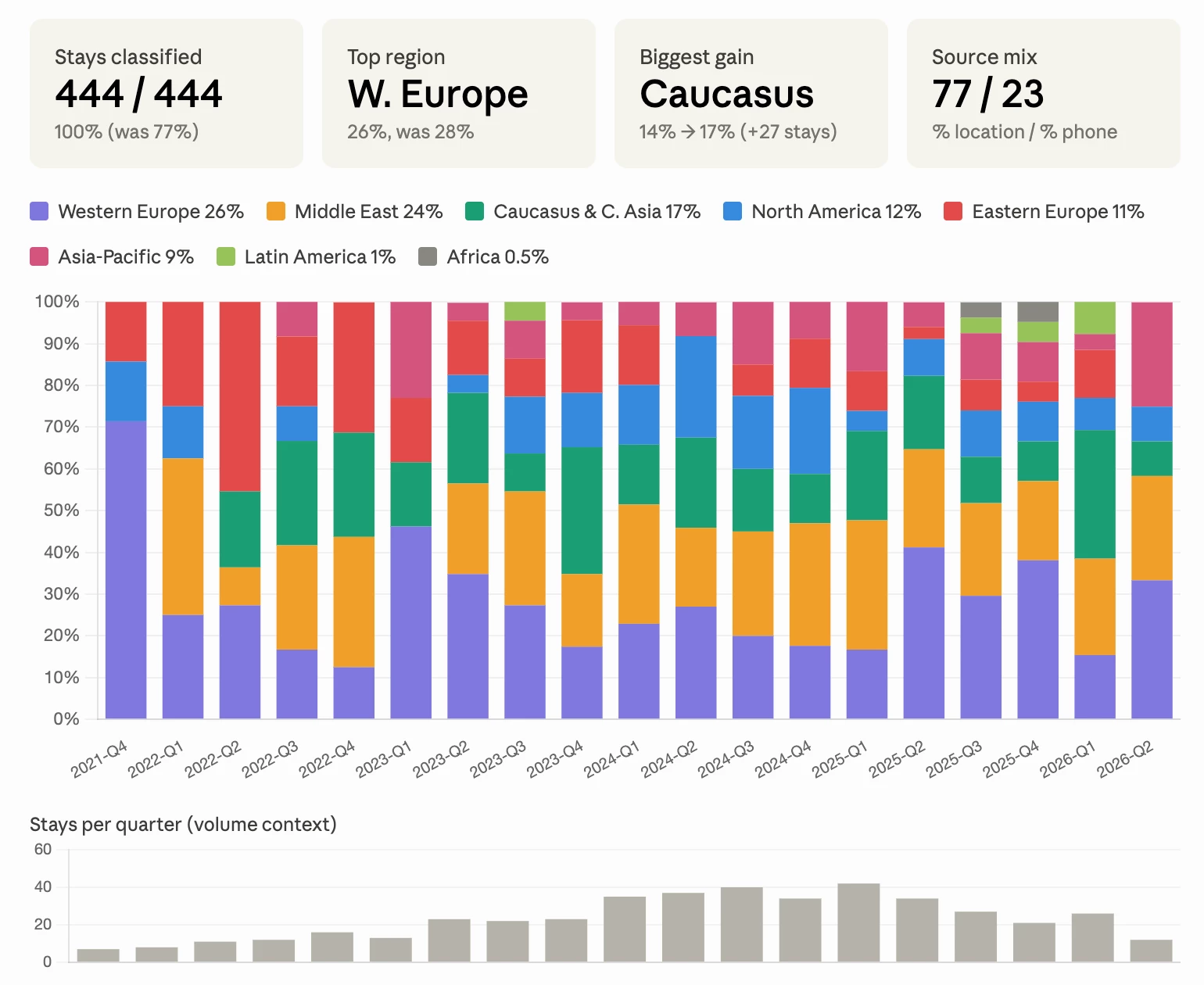

Second run:

- Stronger dataset coverage
- Updated visuals (after asking)
- More confident, real-world insights

It felt like:

“Here’s what your data says—and here’s what it actually means.”

Downside:

It burned through usage fast: 2 runs plus a few follow-ups and I was basically done, but so was my usage for the next 3 hours!

ChatGPT Experience

With the same prompt, ChatGPT took a more structured, step-by-step approach.

- It correctly identified the messy data
- But instead of acting immediately, it:
  - Explained the issue in detail
  - Suggested prompts to run
  - Waited for me to drive
- From there, the workflow became:
  - Run suggested prompt
  - Get results + long explanations
  - Get another suggested prompt
  - Repeat

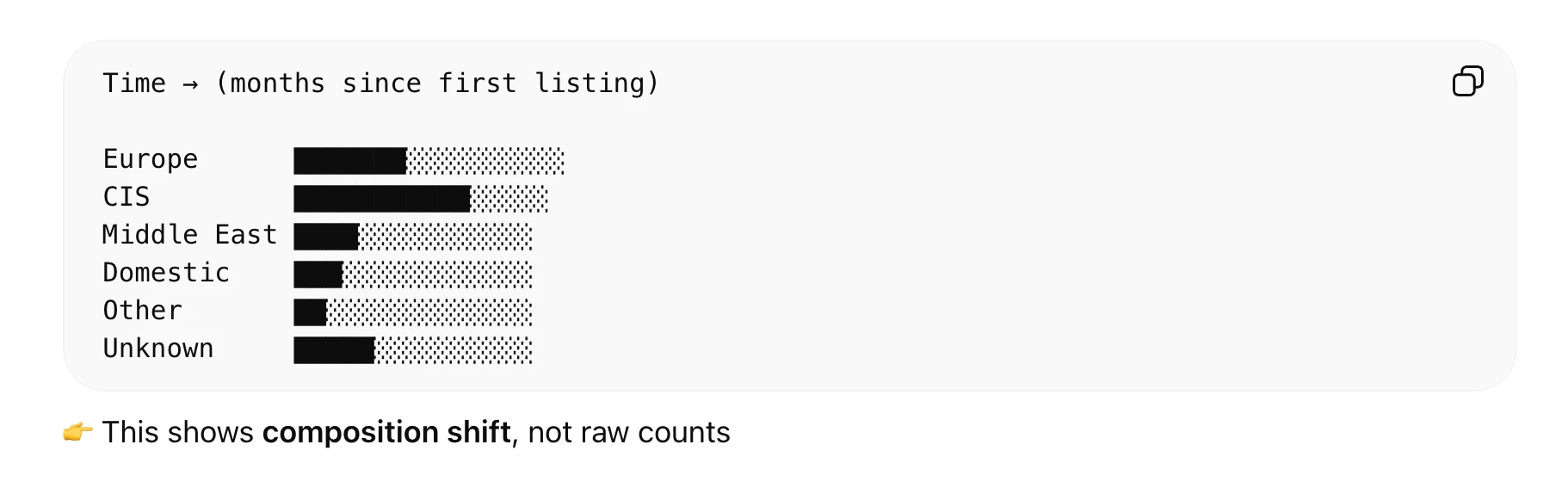

Outputs included:

- Dashboards (a bit “retro” in feel)
- Lots of bullet-point insights
- Heavy focus on methodology rather than conclusions

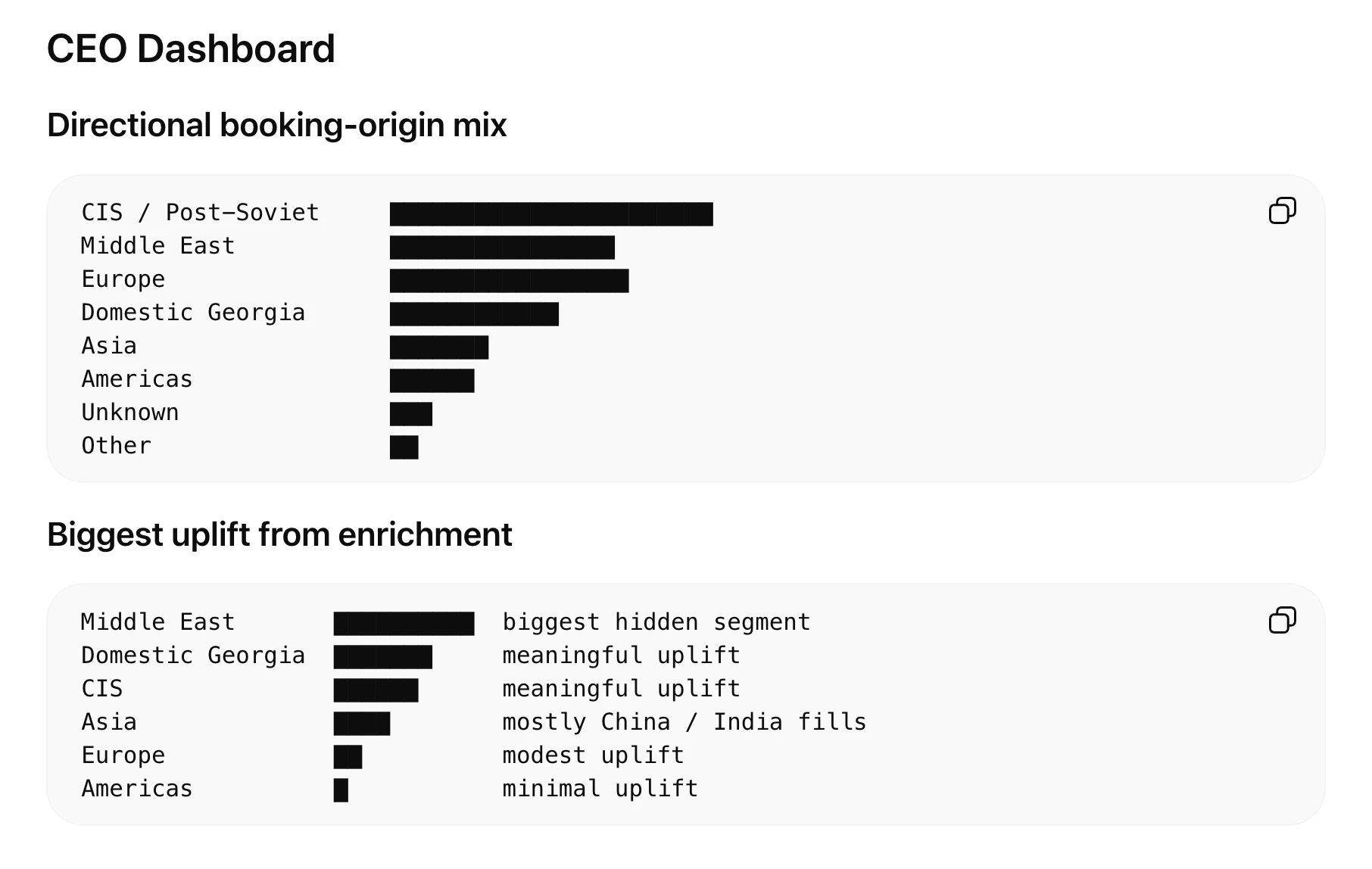

Even when I asked for a CEO-style summary, it still mixed final insights with explanations of how it got there.

It kept suggesting next steps like:

“Monthly booking share by region (with vs without enrichment)”

Each step required manual prompting and steering.
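For anyone wondering what a step like "monthly booking share by region" boils down to, here is a minimal Python sketch. It is not what either model actually ran: the `(check_in, country)` row shape, the function name `monthly_share`, and the sample rows are all made up for illustration, and it says nothing about what "enrichment" meant in that session.

```python
from collections import Counter, defaultdict


def monthly_share(bookings):
    """Return {month: {country: share}} for (check_in, country) rows.

    check_in is assumed to be an ISO date string 'YYYY-MM-DD' and country
    an already-normalized label (hypothetical row shape, not the real
    Hospitable payload).
    """
    counts = defaultdict(Counter)
    for check_in, country in bookings:
        month = check_in[:7]  # 'YYYY-MM'
        counts[month][country] += 1

    shares = {}
    for month in sorted(counts):
        total = sum(counts[month].values())
        shares[month] = {c: n / total for c, n in counts[month].items()}
    return shares


# Made-up rows, for illustration only.
bookings = [
    ("2024-01-05", "Germany"),
    ("2024-01-19", "Germany"),
    ("2024-01-23", "Unknown"),
    ("2024-01-30", "Georgia"),
    ("2024-02-02", "Georgia"),
    ("2024-02-14", "Germany"),
]

shares = monthly_share(bookings)
print(shares["2024-01"])  # {'Germany': 0.5, 'Unknown': 0.25, 'Georgia': 0.25}
```

Keeping "Unknown" as its own bucket makes it visible how much of each month could not be attributed, which is the kind of gap both tools flagged in the data.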

The Real Difference

This wasn’t about which model is “better”; it’s about how they think. Wait, who wrote this? ChatGPT. It really is about which model is better.

Claude

- Proactive
- Makes decisions
- Improves the analysis independently
- Focuses on insight

ChatGPT

- Guided
- Waits for direction
- Suggests frameworks and workflows
- Focuses on process

What This Means for Hosts

If you're using MCP with Hospitable:

Claude is great when you want good answers quickly

→ “Tell me what’s going on in my data”

ChatGPT is off the hook here

→ “Help me build this analysis step by step and make me guide you through every single step”

My Takeaway

For this kind of messy, real-world question:

Claude got me to meaningful insights faster and with less effort

ChatGPT almost got there too—but needed much more steering

One Practical Lesson

If the underlying data is messy (which it often is in Hospitable/Airbnb exports), the model’s ability to interpret and adapt becomes the real differentiator.
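To make "messy" concrete, here is a rough Python sketch of the kind of normalization the location field needs before any chart means anything. Everything in it is an assumption for illustration: the alias table, the sample strings, and the rule of reading the last comma-separated part as the country are hypothetical, not the real Hospitable/Airbnb format and not what Claude or ChatGPT actually did.

```python
from collections import Counter

# Hypothetical alias table. A real one would be built by looking at the
# actual values that come back, as the prompt asks the model to do.
COUNTRY_ALIASES = {
    "usa": "United States",
    "us": "United States",
    "united states": "United States",
    "uk": "United Kingdom",
    "united kingdom": "United Kingdom",
    "deutschland": "Germany",
    "germany": "Germany",
    "georgia": "Georgia",
}


def normalize_location(raw):
    """Map a free-text location to a country label, or 'Unknown'."""
    if not raw or not raw.strip():
        return "Unknown"
    # Assumption: the last comma-separated part is the country.
    # Limitation: "Atlanta, Georgia" would be counted as the country
    # Georgia, which matters a lot for a host based in Tbilisi.
    last = raw.split(",")[-1].strip().lower().rstrip(".")
    return COUNTRY_ALIASES.get(last, "Unknown")


# Made-up strings, for illustration only.
samples = [
    "Berlin, Germany",
    "London, UK",
    " usa ",
    "",
    "Tbilisi, Georgia",
    "München, Deutschland",
    "Somewhere",
]

counts = Counter(normalize_location(s) for s in samples)
print(counts)
```

Anything the table does not recognize lands in "Unknown" rather than being guessed, so the size of that bucket shows how much of the data still needs interpretation.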

Curious if others here have tested MCP with both—are you seeing the same pattern, or different results?

PS: Slightly ironic footnote—this post was polished with ChatGPT… because I had already burned through my Claude usage 😄